Interaural Intensity/Level Differences (IID / ILD)


For high-frequency sound signals (>~1,500Hz), with wavelengths <~0.23m/cycle (i.e. shorter than the average head's half circumference of ~0.28m), auditory localization judgments are based mainly on interaural intensity/level differences (IIDs or ILDs).

Frequencies >~1,500Hz cannot diffract efficiently around a listener's head, which blocks enough acoustic energy to produce interpretable interaural level differences.

The higher the frequency the larger the portion of the

signal energy that will be blocked by the

head, producing increasingly stronger and more salient interaural intensity

differences.
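The wavelength figures quoted in this module follow from wavelength = speed of sound / frequency. The following is a minimal sketch, assuming the speed of sound (345m/s) and average head half circumference (~0.28m) used elsewhere in these notes; the function and constant names are illustrative only.

```python
SPEED_OF_SOUND = 345.0          # m/s, value assumed from elsewhere in the module
HEAD_HALF_CIRCUMFERENCE = 0.28  # m, average value quoted in the text

def wavelength(frequency_hz: float) -> float:
    """Return the wavelength in metres for a frequency in Hz."""
    return SPEED_OF_SOUND / frequency_hz

for f in (500.0, 1500.0):
    lam = wavelength(f)
    ratio = lam / HEAD_HALF_CIRCUMFERENCE
    print(f"{f:6.0f} Hz: wavelength {lam:.2f} m = {ratio:.2f} x head half circumference")
```

At 1,500Hz the wavelength (~0.23m) is shorter than the head's half circumference, while at 500Hz (~0.69m) it is more than twice as long, which is why head shadowing matters only at the higher frequencies.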

|

For frequencies below 500Hz, IIDs are negligible (why?) and increase gradually with frequency.

For frequencies >1,500Hz, IIDs change systematically with azimuth changes and

provide reliable localization cues on the horizontal plane, except for

front-to-back confusion, where IID=0

(why?).

|

Artificial IIDs

(i.e. imposed through

headphones and not due to sound-source positioning relative to the head)

can provide localization cues at all frequencies.

Example:

Three

successive 300Hz tones are presented in stereo (must use

headphones)

_In the first, the

intensity level is higher on the left channel

(by 7dB);

_In the second, the level is the same across

channels;

_In the third, the

level is higher on the right channel

(by 7dB).

This arrangement results in signal lateralization that appears to move from

the left, to the middle, to the right, following the signals' IID.
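The three-tone IID example can be approximated in code. This is a sketch only, not the procedure used to create the module's audio files: the sample rate, tone duration, peak amplitude, and the names `db_to_gain` and `stereo_tone_iid` are all assumptions made for illustration.

```python
import math

SAMPLE_RATE = 44100  # samples per second (assumed value)

def db_to_gain(level_db):
    """Convert a level offset in dB to a linear amplitude factor."""
    return 10.0 ** (level_db / 20.0)

def stereo_tone_iid(freq_hz, duration_s, left_db=0.0, right_db=0.0, peak=0.4):
    """Return (left, right) sample lists for a sine tone with per-channel level offsets."""
    n = int(SAMPLE_RATE * duration_s)
    left_gain = peak * db_to_gain(left_db)
    right_gain = peak * db_to_gain(right_db)
    tone = [math.sin(2.0 * math.pi * freq_hz * i / SAMPLE_RATE) for i in range(n)]
    return [left_gain * s for s in tone], [right_gain * s for s in tone]

# The three tones of the example: left 7dB higher, equal levels, right 7dB higher.
left_louder = stereo_tone_iid(300.0, 0.5, right_db=-7.0)
centered = stereo_tone_iid(300.0, 0.5)
right_louder = stereo_tone_iid(300.0, 0.5, left_db=-7.0)
```

The level difference is created by attenuating one channel rather than boosting the other, which keeps the samples within range. The resulting lists could be written to a stereo file with Python's standard `wave` module for headphone listening.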

Audio engineers often use

artificial IIDs to simulate different sound source positions.

This practice may introduce complications

in outdoor, live performance settings, where audiences

listen through left and right loudspeaker arrays positioned

far (>25ft) apart.

One such complication is related to a

perceptual strategy developed to address auditory localization

ambiguities when listening in reflective environments. The manifestation of

this strategy (referred to as "precedence effect") is addressed at

the end of the module.

|

|

Interaural Time/Phase Differences (ITD / IPD) |

For low frequency sound

signals (e.g. <~500Hz, with

wavelengths >~0.69m, equivalent to > twice the average head's

half circumference

of ~0.28m),

auditory localization judgments are based mainly on single-cycle interaural time differences (ITDs), equivalent to

interaural phase differences (IPDs).

The minimum detectable interaural time difference is 10 microseconds (0.000010s), corresponding to a shift in sound source location by 1° in azimuth relative to straight ahead (consistent with the minimum audible angle).

Explanation: Low frequency signals

with wavelengths that are large enough --compared to the average

listener's head-- to diffract efficiently, do not produce perceptible interaural level differences.

However, they do reach the two ears

at different times. For frequencies <500Hz, for example, the interaural time difference

is shorter than the signal's period and the entire head fits within

< half a wavelength. Consequently, at low frequencies, sound-source location produces unambiguous

and interpretable interaural phase differences or IPDs (save for

locations on the median plane, where IPD=0), because sounds will

arrive at each ear at a different phase within the same cycle.

The maximum possible interaural

time difference that can occur due to sonic energy travelling

around the head is ~0.0008s (assuming an average head half

circumference of ~0.28m and speed of sound = 345m/s).

For a sine signal to reliably take advantage of IPD cues, its

period must be >1.5 times this value

(>0.0012s) so that the entire head can fit within <2/3 of

a cycle. IPDs are therefore reliable localization

cues for frequencies <700-800Hz. The highest frequency for which IPDs provide any cues is

~1,500Hz.
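The arithmetic behind the maximum ITD and the reliable-IPD limit can be checked directly. This is a minimal sketch, assuming the head half circumference (~0.28m) and speed of sound (345m/s) quoted above; the variable names are illustrative.

```python
SPEED_OF_SOUND = 345.0          # m/s, as quoted in the text
HEAD_HALF_CIRCUMFERENCE = 0.28  # m, as quoted in the text

# Longest path difference around the head divided by the speed of sound.
max_itd_s = HEAD_HALF_CIRCUMFERENCE / SPEED_OF_SOUND

# For reliable IPD cues the period must exceed 1.5 x the maximum ITD.
min_period_s = 1.5 * max_itd_s
max_reliable_freq_hz = 1.0 / min_period_s

print(f"maximum ITD: {max_itd_s * 1e3:.2f} ms")
print(f"minimum period: {min_period_s * 1e3:.2f} ms")
print(f"upper frequency for reliable IPD cues: {max_reliable_freq_hz:.0f} Hz")
```

With unrounded inputs the limit comes out slightly above 800Hz, close to the upper end of the 700-800Hz range given above.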

IPDs also provide useful

localization cues for

complex signals whose spectrum includes components with

frequencies <~750Hz.

For complex signals with no low-frequency content (or for

amplitude-modulated high-frequency-content

signals), IPDs remain

useful as long as several of the frequency components are

separated by (or as long as the modulation rate is) <~750Hz. In such cases,

the complex signal's

envelope (in Plack, 2005:178) will display amplitude fluctuations at rates <~750Hz and

the IPDs between the signal envelopes arriving in each ear will

provide interpretable localization cues.

At higher frequencies,

single-cycle IPDs do not provide useful localization cues

because they depend not only on sound source

location on the horizontal & median planes but also on frequency and,

most importantly, distance. Different sound source locations can

result in the same IPDs, while the same angular location in the localization

coordinate system may result in different IPDs, depending on distance.

|

In Plack, 2005: 176 |

Strongest separation in lateralization (i.e.

in virtual location inside the head) occurs

for IPDs = 1/4 cycle, corresponding to signals with:

Period T ~0.0032s/cycle (= 4 x "longest possible ITD" = 4 x 0.0008s) and

Frequency f ~315Hz (= 1/T = 1/0.0032).

Example:

Three

successive 300Hz tones are presented in stereo (must use

headphones - no onset difference)

_In the

first, the left channel

leads by 1/4 cycle;

_In the

second, the phase is the same across channels;

_In the third, the right channel leads by 1/4

cycle.

This arrangement results in signal lateralization that appears to move from

the left, to the middle, to the right, following the signals' IPD.
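The three-tone IPD example can be sketched in the same way as the IID example, this time changing only the starting phase of one channel. The sample rate, duration, peak amplitude, and the names `stereo_tone_ipd` and `lead_time_s` are assumptions for illustration, not the method used for the module's audio files.

```python
import math

SAMPLE_RATE = 44100  # samples per second (assumed value)

def stereo_tone_ipd(freq_hz, duration_s, left_lead_cycles=0.0, peak=0.4):
    """Return (left, right) sine tones that start together but differ in phase.

    left_lead_cycles > 0 advances the left channel's phase (left leads);
    left_lead_cycles < 0 makes the right channel lead by the same amount.
    """
    n = int(SAMPLE_RATE * duration_s)
    lead = 2.0 * math.pi * left_lead_cycles
    step = 2.0 * math.pi * freq_hz / SAMPLE_RATE
    left = [peak * math.sin(step * i + lead) for i in range(n)]
    right = [peak * math.sin(step * i) for i in range(n)]
    return left, right

def lead_time_s(freq_hz, lead_cycles):
    """Time equivalent of a phase lead expressed in cycles."""
    return lead_cycles / freq_hz

# Left leads by 1/4 cycle, in phase, right leads by 1/4 cycle.
left_leads = stereo_tone_ipd(300.0, 0.5, 0.25)
in_phase = stereo_tone_ipd(300.0, 0.5, 0.0)
right_leads = stereo_tone_ipd(300.0, 0.5, -0.25)
```

Both channels begin at the same sample, so there is no onset difference; only the phase within the cycle differs. At 300Hz a quarter cycle is `lead_time_s(300.0, 0.25)`, about 0.00083s, close to the longest ITD the head can produce.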

Listen to three stereo signals with the same phase relationships, as

above, at

100Hz and

8000Hz.

What types of lateralization do they result

in? Do you still get a clear sense

of motion?

Interaural phase difference of

exactly 1/2

cycle (180°) results in a wider stereo image rather than

in lateralization changes.

Example:

Two

successive 300Hz tones are presented in stereo

(must use headphones - no onset difference)

_In the first, both channels are in phase (i.e. IPD = 0°);

_In the second, there is a 1/2 cycle phase difference between channels (i.e. IPD = 180°).

|

|

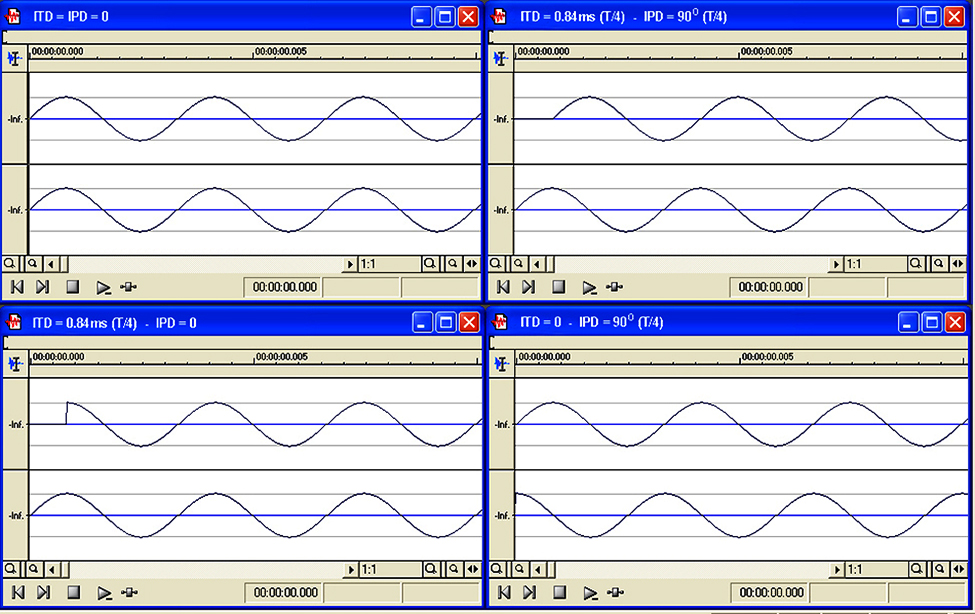

Interaural Time Difference (ITD)

vs. Interaural Phase Difference (IPD)

(must listen over

headphones)

* When IPD=0, the signal appears to come from the

center, regardless of whether

ITD=0 (top-left

graph) or

ITD≠0 (bottom-left

graph).

*

When IPD≠0, the signal appears to come from one side,

regardless of whether

ITD≠0 (top-right

graph) or

ITD=0

(bottom-right

graph)

In other words, apparent location of the sound source is

determined by the IPD rather than the ITD values.

ITDs

are relevant only in terms of the IPD values they impose.
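The relationship between the two quantities can be made explicit: for a steady sine tone, the IPD is the ITD expressed as a fraction of the period, wrapped to within one cycle. This is a minimal sketch; the function name and the wrapping convention (results between -0.5 and +0.5 cycles) are assumptions chosen for illustration.

```python
def itd_to_ipd_cycles(itd_s: float, freq_hz: float) -> float:
    """Return the IPD, in cycles, produced by a given ITD for a steady sine tone.

    The result is wrapped to the range [-0.5, 0.5) because whole-cycle
    shifts of a steady sine tone are indistinguishable from no shift.
    """
    cycles = itd_s * freq_hz
    return (cycles + 0.5) % 1.0 - 0.5

# 500Hz tone (period 0.002s): a full-period ITD wraps to IPD = 0,
# while a quarter-period ITD gives IPD = 1/4 cycle.
print(itd_to_ipd_cycles(0.002, 500.0))
print(itd_to_ipd_cycles(0.0005, 500.0))
```

An ITD equal to one full period therefore yields IPD=0 (the ITD≠0, IPD=0 case), which is heard at the center despite the nonzero time difference.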

|

INTERLUDE:

DICHOTIC BEATS

|

The phenomenon of

dichotic beats (often

inaccurately referred to as 'binaural beats') describes a

beating-like sensation arising when two signals with

slightly different frequencies are presented dichotically (i.e. one per

ear through headphones).

Contrary to the beating

sensations that accompany signals with amplitude

fluctuation rates <~15 fluctuations/sec.,

dichotic beats are not the result of periodic alterations

between constructive and destructive interference; dichotic

presentation does not permit physical interaction. Rather, they are the result of periodic

changes in IPDs and a direct

manifestation of our ability to detect the systematic IPD changes that, for low

frequencies, accompany sound-source motion. So,

the sensation of dichotic beats is based on our hearing mechanism's use of

static IPDs as sound-source localization cues and of dynamic IPDs as

sound-source

motion detection cues, at low frequencies.

Dichotic/binaural beats have acquired cult status,

with hundreds of websites and videos linking them to

a variety of

mental effects.

The apparent fascination is partially due to the disorienting

sensation elicited by this unnatural form of listening (dichotic

listening) and partially due to the coincidence between the most

salient dichotic beat rates and the frequencies of

some brain-waves (e.g. Theta and Delta

brain-waves).

For very small frequency differences (<~4Hz) between ears, the

resulting IPD modulations give rise to a "rotating" sensation inside the

head that can be easily identified as such (e.g.

Channel 1: 250Hz & Channel 2: 250.5Hz).

For larger frequency differences, (>~4Hz), the sensation does resemble

the loudness fluctuations (beating) that would result if

the tones in each ear were allowed to interfere, because the rotation

rate is faster than the hearing mechanism's ability to

follow it. However, this beating-like sensation is much less

pronounced than it would be if actual

interference had taken place (e.g.

Channel 1: 250Hz & Channel 2: 257Hz -

listen via headphones and via loudspeakers;

is there a perceptual difference?).
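The periodic IPD change behind dichotic beats can be sketched numerically. Assuming two pure sine tones that start in phase (an assumption made for illustration, as are the function names), the IPD advances at a rate equal to the frequency difference between the ears.

```python
def ipd_cycles(f_left_hz, f_right_hz, t_s):
    """IPD of the right tone relative to the left, in cycles wrapped to [0, 1), at time t_s."""
    return ((f_right_hz - f_left_hz) * t_s) % 1.0

def rotation_rate_hz(f_left_hz, f_right_hz):
    """Number of full IPD cycles per second, equal to the frequency difference."""
    return abs(f_right_hz - f_left_hz)

# Slow case from the text: 250Hz vs 250.5Hz gives one full rotation every 2 seconds.
print(rotation_rate_hz(250.0, 250.5))
print(ipd_cycles(250.0, 250.5, 1.0))

# Faster case from the text: 250Hz vs 257Hz gives 7 rotations per second.
print(rotation_rate_hz(250.0, 257.0))
```

In the slow case the IPD sweeps through a full cycle over two seconds, slowly enough to be followed as a rotation; at 7 cycles per second it is too fast to follow and is heard as a beating-like fluctuation instead.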

Dichotic and

interference-based beating sensations are manifestations of different physical, physiological, and perceptual phenomena.

(For additional information see

here

and

here)

|

Monaural

& Interaural Spectral Differences - HRTF / ATF

|

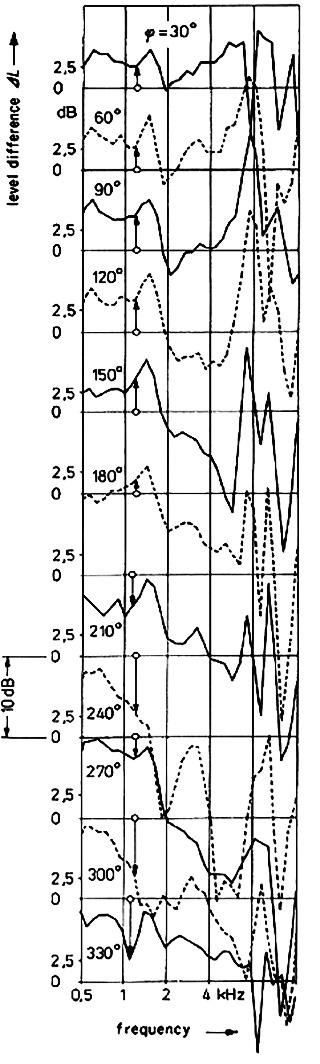

For sound

signals of intermediate frequencies (~ 500Hz<f<~1,500Hz), IID

and IPD cues do provide some useful

localization information, but only in the azimuth, and only if combined (IID

cues are perceivable down to ~500Hz and IPD cues are perceivable

up to ~1,500Hz).

For such frequencies, where IID and IPD cues are

ambiguous/unreliable in the azimuth, as well as for all frequencies in the case of sound-source elevation changes,

where IID=IPD=0, the auditory system relies on

monaural spectral cues and interaural

spectral difference cues.

Monaural spectral cues and interaural

spectral difference cues are due to the torso, head, and outer ear

performing azimuth- and, most importantly, elevation-dependent spectral

filtering on signals. This filtering 'colors' the spectral

composition of the signal arriving in each ear differently, depending on

sound-source location.

It is commonly referred to as Head Related Transfer Function or HRTF.

The more accurate Anatomic Transfer

Function or ATF captures the contribution

of parts of the body other than the head but is less

commonly used.

As is the case with most

sound source localization

cues, spectral cues are not foolproof. For example, in

the median plane, signals rich in high frequencies tend to be localized

higher than signals rich in low frequencies, even when the source

elevation remains the same.

At frequencies <~200Hz,

whose wavelengths

are larger than the dimensions of the structures involved

(head, torso, pinnae), interaural spectral differences and the associated HRTFs

do not provide useful localization cues at any orientation.

|

Interaural spectral differences

due specifically to structural differences between the pinnae

of the two ears contribute to better lateralization (i.e. more accurate

virtual location of the sound within the head), especially in

the elevation plane. Pinna-related

spectral filtering alone does not help us 'construct' a complete aural 'image'

of the outside world.

Experiments that apply HRTFs (ATFs) obtained from

dummy heads/torsos on the equalization and reproduction of

signals indicate that interaural spectral differences

due to the

entire torso/head/pinnae system contain information that helps us

perceive and reconstruct the actual

source location

outside the head.

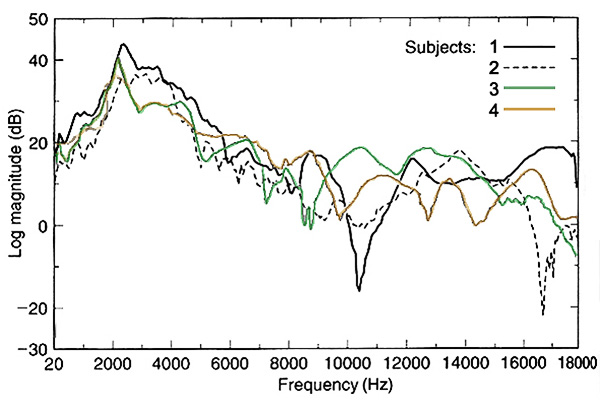

Head Related Transfer

Function (HRTF) is defined as the ratio of the sound

pressure spectrum measured at the eardrum to the sound

pressure spectrum that would exist at the same location

(i.e. at the eardrum or the center of the head) if the

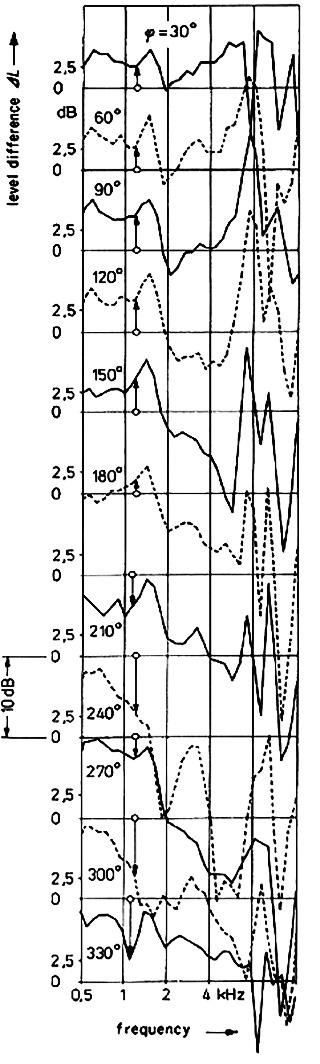

listener was removed. The figure to the right displays HRTFs as a function of sound-source

elevation

angle. From this and other similar

datasets it has been inferred

that:

* The 8kHz region seems to correlate with overhead perception (i.e. spectral changes in this region correlate with changes in the perceived location of sound sources above our heads)

* Regions in the frequency bands 300-600Hz & 3000-6000Hz seem to correlate with frontal perception

* Regions centered at around 1200Hz & 12000Hz seem to correlate with rear perception.
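The HRTF definition given before this list can be written compactly as a ratio of spectra. The symbols are illustrative and not taken from the source: P_ear is the sound pressure spectrum at the eardrum, P_ref is the spectrum at the same point with the listener absent, and θ and φ stand for source azimuth and elevation. The second expression gives the corresponding gain in dB, which is what HRTF plots typically display.

```latex
H(f, \theta, \phi) = \frac{P_{\text{ear}}(f, \theta, \phi)}{P_{\text{ref}}(f)},
\qquad
G_{\text{dB}}(f, \theta, \phi) = 20 \log_{10} \left| H(f, \theta, \phi) \right|
```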

HRTFs are personalized, as they

depend on variable pinna, head, and torso

construction among individuals (e.g. data in the figure, below).

Consequently, spectral sound-source localization cues and the associated HRTFs are most likely learned through

experience, with individuals generally localizing better with

their own cues than with those of others.

At the same time, it has been

shown that physiological differences may result in some

listeners performing much better than others on auditory

localization tasks.

Imposing 'good' HRTFs (through

appropriately equalized headphone listening) on individuals who

have difficulty localizing sound sources can improve their localization

performance by providing more salient interaural spectral

differences, assuming comparable head size between 'donor' and

'recipient' and sufficient learning time.

|

|

Experimental explorations of HRTFs/ATFs use

specially-designed binaural heads (e.g.

KEMAR,

by G.R.A.S. Sound & Vibration, Denmark) to record signals at

various positions in their path to the ear drum and tease

out the various anatomical spectral-filtering contributions.

|

Binaural Cues & "Release from Masking"

|

IID

and IPD cues also help us perceive tones that would have otherwise been masked. The following

listening examples illustrate this point and correspond to the three scenarios described in the

figure to the right (must use headphones).

(A)

Example 1: a 300Hz sine signal with no IIDs

or IPDs is presented along

with a 600Hz-wide noise band, centered at 300Hz. Due to the level difference

between signal and noise (the signal is 15dB below the noise) the sine tone is masked.

(B)

Example 2: same as in (A) but with a 180° IPD for the sine signal.

In spite of the level difference between noise and signal, the

sine tone is now perceivable.

(C)

Example 3: same as in (A) but with the left channel of the sine signal

removed (extreme case of IID for the sine signal). In spite of the

now increased level

difference between noise and signal, the sine tone is again perceivable.

In other words, a masked tone

can become audible by reducing its overall level.

[ signals

used for the audio examples:

Noise /

300Hz /

300Hz 180° IPD /

300Hz no left channel ]

IPDs (for low

frequencies) and IIDs (for high frequencies) can reduce a signal's

detection threshold by up to ~15dB. The effect is less

salient for "IPD & high frequencies" and "IID & low frequencies" contexts (why?).

The release from masking of complex

signals is facilitated further by interaural spectral

differences.

The described release from

masking is not due to our ability to localize a signal thanks to the imposed

interaural differences. Rather, it is due to signal de-correlation between ears, supported by the

interaural changes and supporting the employment of cognitive

strategies (e.g. attention focusing) for signal detection

in complex sonic environments.

|

(in Plack, 2005: 180) |

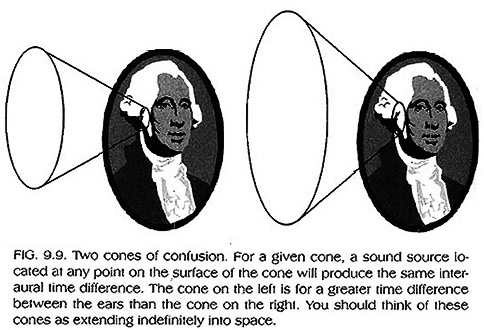

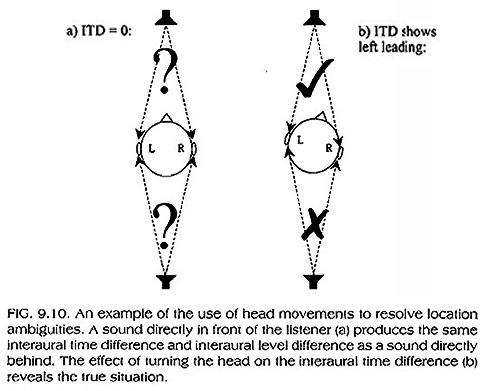

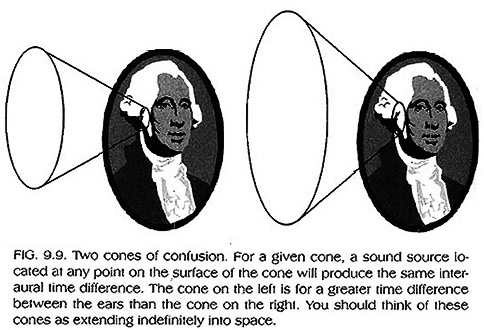

Cone of

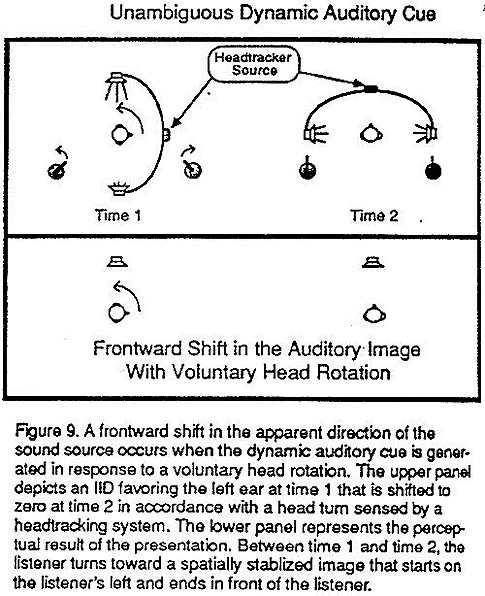

Confusion - Head-Movement Localization Cues

|

Cone of confusion

Sound sources moving on a sagittal plane

(i.e. changing in elevation) produce no IID or IPD changes and

only moderate interaural spectral difference changes.

Consequently, their movement is difficult to track by purely

auditory means. More generally, for any given IID or IPD value,

there will be a conical surface extending out of the ear that will

produce identical IIDs and IPDs, hindering sound source

localization over the surface (see to the right).

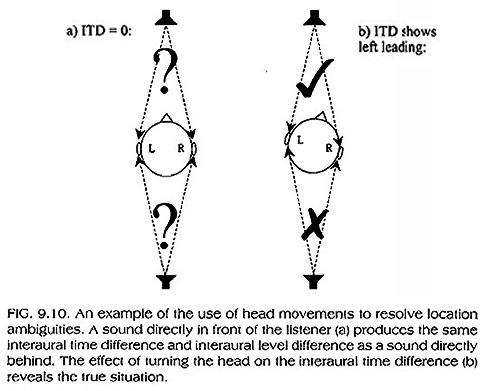

The most effective strategy in

resolving sound-source localization ambiguities is head movement,

assuming the sound signal lasts long enough, unchanged, to allow for

such a movement to be of use. Moving the head in the horizontal plane

can help resolve front-to-back ambiguities (e.g. see below-left), while head tilting can help resolve top-to-bottom

ambiguities.

Interaural

spectral differences and the associated HRTFs do help resolve

localization ambiguities, whether in the median sagittal plane or within

any cone of

confusion, but only partially.

As is the case with most sound-source

localization cues, the salience of head-movement cues depends largely upon long-term

learning and experience.

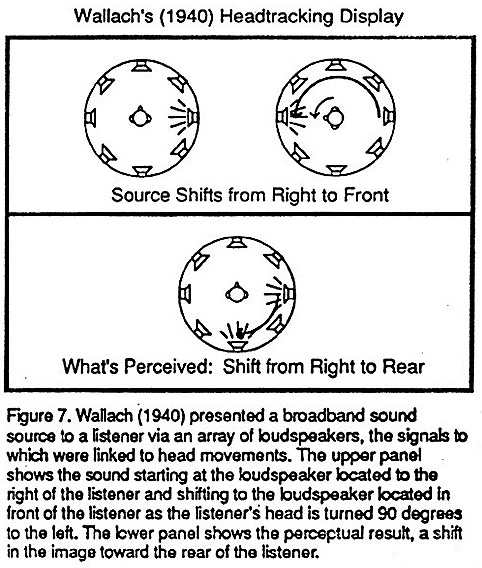

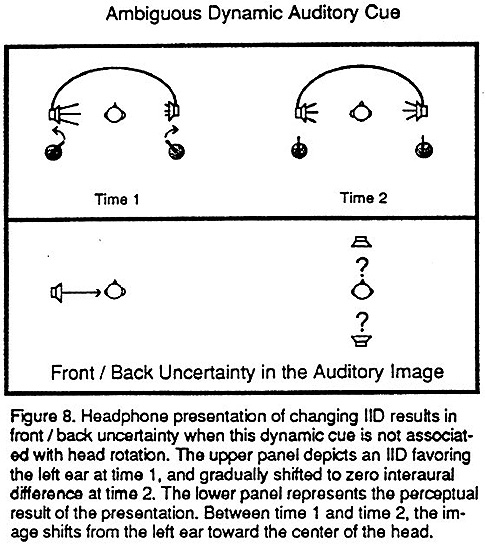

Auditory localization experiments using

headphones and conflicting source and head

movements exploit our

reliance on previous experience, resulting in revealing

illusions.

Explore the figures, below, and read

the explanations in the captions.

|

(in Plack, 2005: 184) |

(in Plack, 2005: 185) |


Judging Sound-Source Distance

|

Distance and loudness

Judging sound-source distance can be partially aided by loudness cues. In general, softer sounds are more likely to be associated with sources farther

away and louder sounds with sources

nearer.

Loudness cues are only reliable when

comparing the loudness of a sound to a known reference that precedes it

and/or when judging familiar sounds. In all other cases, loudness

cues cannot reliably support distance judgments.

Even in reliable contexts,

distance changes are underestimated when

judged based solely on loudness changes.

More specifically,

although distance doubling in the free field (i.e. where there are no

reflections) corresponds to an ~6dB SPL reduction, listeners require

an ~20dB SPL reduction in order to report that their distance to a

sound-source has doubled.
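The size of this underestimation can be illustrated with the free-field relationship between distance and level. This is a minimal sketch, assuming a point source radiating in the free field (inverse-square law); the function names are illustrative.

```python
import math

def spl_drop_db(distance_ratio: float) -> float:
    """Free-field SPL reduction in dB when distance grows by distance_ratio."""
    return 20.0 * math.log10(distance_ratio)

def distance_ratio_for_drop(drop_db: float) -> float:
    """Distance ratio that physically produces a given free-field SPL reduction."""
    return 10.0 ** (drop_db / 20.0)

print(f"doubling the distance: {spl_drop_db(2.0):.1f} dB reduction")
print(f"a 20 dB reduction corresponds to {distance_ratio_for_drop(20.0):.0f}x the distance")
```

Under these assumptions, the ~20dB reduction that listeners need before reporting a doubling of distance corresponds physically to a tenfold increase in distance.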

Distance and reverberation

In reflective environments, distance

judgments are aided by reverberation cues. In general, the greater the distance of the source the greater

the proportion of the reverberant (relative to the direct) sound.

Direct-to-reverberant-sound cues

provide coarse source-distance information and only become perceptible

for distance changes by a factor of two or larger. In

addition, distance-change judgments based on this cue alone tend, again,

to be underestimated.

Distance and spectral composition (timbre)

Changes in sound-source

distance are linked to timbral changes, mainly due to corresponding

changes in a sound's high-to-low frequency SPL ratio. In general, increasing the distance from a sound source

tends to reduce this ratio because air absorption reduces high frequencies

far more than low frequencies. This cue is most perceptible for large changes in distance.

In addition, the increased likelihood

of higher frequencies to be blocked by obstacles and of lower

frequencies to diffract around them further reduces a sound's

high-to-low frequency energy ratio with distance. Absorption (by

air) and blocking (by obstacles) of high frequency can therefore

explain the observation that low frequencies travel much farther than high frequencies and that, at very large distances, the level of high frequencies becomes negligible.

NOTE

In the absence of obstacles and for short-to-middle distances

(~20-40m from a source, where air absorption is negligible), all

frequencies lose ~6dB SPL for each doubling in distance (why?)

and the high-to-low frequency SPL ratio remains fixed.

However:

A given change in dB corresponds to a larger loudness change

at low vs. high frequencies, as can be inferred from the

equal loudness contours (why?).

In the above scenario, increasing the distance from a source

will therefore reduce the loudness of low frequencies more than that

of high frequencies, even though the SPL of both frequency

ranges will be reduced by the same amount. In other words,

while the SPL ratio of high-to-low frequencies remains

fixed, their loudness ratio increases.

|

|

Sound-source distance and overall

location judgments based solely on aural cues are not precise and

require a combination of experience/familiarity with any given sound source

and with changes in context, loudness, reverberation, timbral,

and visual cues that may accompany changes in sound source location.

This is one of the reasons why,

for example, AI implementations of sound source localization cues to

self-driving vehicles had, until recently, failed. The most promising

approaches, based on neural networks and deep learning, employ the discussed sound-source

localization cues differently than human listeners.

See for example these studies:

_

Three-Dimensional Sound Source Localization for Unmanned Ground

Vehicles with a Self-Rotational Two-Microphone Array

_

Sound Source Localization of Cars at Intersections Based on Deep Learning

|

The Precedence Effect

|

The precedence effect describes a learned strategy employed implicitly

by listeners in order to address conflicting or ambiguous localization

cues occurring in environments where sound wave reflections play an

important role (e.g. all rooms other than anechoic environments).

This strategy is believed to develop through exposure to reverberant

listening contexts and is eventually applied automatically to all

listening contexts, leading to possible auditory localization

"illusions."

According to this effect, listeners

make their sound source localization judgments based on the earliest arriving

sound onset. The term "precedence" is used to indicate that the

direct sound, with presumably accurate localization information, is

given precedence over the subsequent reflections and reverberation,

which convey inaccurate localization information and blur the

source's aural "image." In fact,

in reflective/reverberant environments, both IPD and ILD cues may be

diffused to such an extent that they become unusable, making the

precedence effect a necessary localization strategy.

|

|

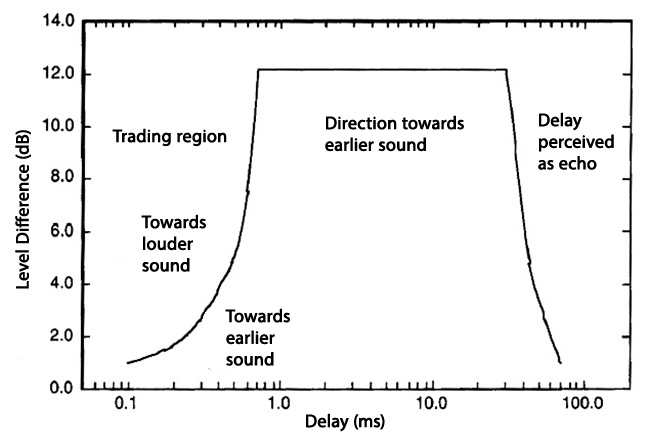

The Haas effect (named after mid

20th century German psychoacoustician,

Helmut Haas), describes the observation that, for

arrival-time differences of up to 30-40 milliseconds, the precedence

effect persists (i.e. listeners localize the sound source based

on the earlier arriving signal) even if the delayed signal is up to 12dB

stronger than the first (see the image, below, and

watch this

demonstration).

The Franssen effect (named after

mid 20th century Dutch physicist and inventor, Nico V. Franssen) describes the observation that an intense,

fast-rising tone presented in a reverberant space via a single

speaker will continue to be perceived as originating from that

speaker even after it has gradually moved to a second loudspeaker,

45 degrees away.

The figure to the left illustrates a precedence

effect demonstration with two loudspeakers reproducing the same pulsed wave.

The pulse from the left speaker leads in the left ear by a few hundred

microseconds, suggesting that the source is on the left. The pulse from the

right speaker leads in the right ear by a similar amount, which provides a

contradictory localization cue. Because the listener is closer to the left

speaker, the left pulse arrives sooner and wins the competition: the listener

perceives just a single pulse coming from the left.

From

"How we Localize

Sound" by American physicist, W. M. Hartmann; standard reference resource on

the topic of sound source localization.

[ Optional:

Watch Prof. Hartmann's 2018 presentation on the

state of sound source localization research at McGill University's

Center for

Interdisciplinary Research in Music Media and Technology. ]

|

|

The

figure, below, illustrates the interaction between interaural time & intensity differences, observed in experiments exploring the precedence effect.

-

For small delays (<~1ms) between ears (i.e. small differences

in the distance between a sound source and each ear), localization is biased towards the side producing the

louder sound.

-

For delays between 1-40ms, localization is biased

towards the

earlier sound.

-

For larger delays we hear two sounds, with the second

perceived as an echo of the first, even if the 'echo' is stronger than the

original.
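The three delay ranges listed above can be summarized as a simple lookup. This is a sketch only: the boundaries (~1ms and ~40ms) are the approximate values quoted in this list, real transitions are gradual and depend on the signal, and the function name and return labels are hypothetical.

```python
def precedence_regime(delay_ms: float) -> str:
    """Classify the delay between two copies of a sound into the ranges listed in the text."""
    if delay_ms < 0:
        raise ValueError("delay_ms must be non-negative")
    if delay_ms < 1.0:
        return "biased towards the louder sound"
    if delay_ms <= 40.0:
        return "biased towards the earlier sound"
    return "two sounds heard, the second as an echo"

for delay in (0.5, 10.0, 60.0):
    print(f"{delay:5.1f} ms: {precedence_regime(delay)}")
```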

[Optional:

Brown et al., 2015. The Precedence Effect in Sound

Localization.] |

|

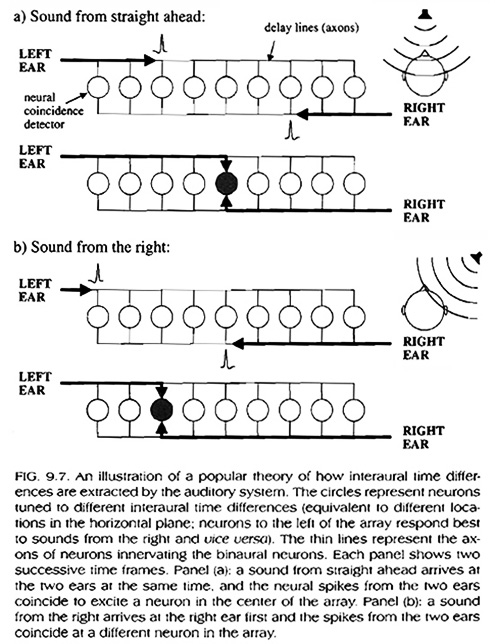

OPTIONAL: Sound source localization neural mechanisms

|

|

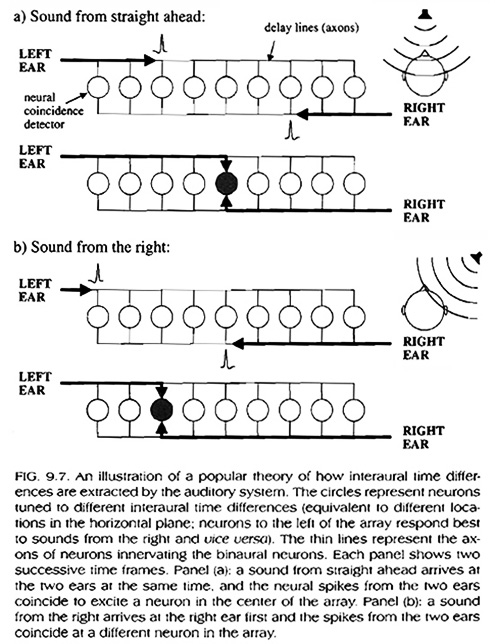

To explain ITD (IPD) detection, American psychologist

Lloyd Jeffress hypothesized the presence of a coincidence detector, at the

neural level, that uses delay lines to compare arrival times at

each ear. His theory is illustrated in the figure,

below left (Jeffress, 1948, in Plack, 2005: 182).

Assuming the presence of neuron arrays, which are tuned to

different delays and encode signal arrival time differences

between ears, the Jeffress model is equivalent to cross-correlation, comparing the inputs from each ear at

different time delays [

video

explanation ].
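The equivalence to cross-correlation can be sketched as a search for the internal delay at which the two ear signals match best, with each candidate lag playing the role of one delay-tuned coincidence detector. This is an illustrative signal-processing sketch, not a physiological model: the function `best_lag`, the noise test signal, and the 12-sample delay are all assumptions.

```python
import random

def best_lag(left, right, max_lag):
    """Return the lag (in samples) that maximizes the cross-correlation of the two signals.

    A positive result means the right-ear signal is a delayed copy of the
    left-ear signal, i.e. the sound reached the left ear first.
    """
    best, best_score = 0, float("-inf")
    n = len(left)
    for lag in range(-max_lag, max_lag + 1):
        # Each lag acts like one coincidence detector tuned to that delay.
        score = 0.0
        for i in range(n):
            j = i + lag
            if 0 <= j < n:
                score += left[i] * right[j]
        if score > best_score:
            best, best_score = lag, score
    return best

# Test signal: noise reaching the right ear 12 samples after the left ear.
rng = random.Random(0)
left = [rng.uniform(-1.0, 1.0) for _ in range(400)]
delay = 12
right = [0.0] * delay + left[:-delay]

print(best_lag(left, right, max_lag=30))
```

The lag with the highest score recovers the 12-sample delay imposed on the right channel. Dividing it by the sample rate would give the corresponding ITD in seconds.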

|

|

Jeffress's model has been partially confirmed by

physiological evidence from birds (e.g.

barn owl).

However, evidence from mammals (e.g. gerbil) suggests broad sensitivity to interaural time differences per characteristic frequency, corresponding to the period of that frequency

(see above; in Plack, 2005: 183).

This

observation is consistent with the already discussed observations

that

a) ITDs do not provide sound source localization at high

frequencies

(the Jeffress model cannot explain this) and

b) it

is a very special type of ITD that is of importance: IPDs

(only up to 1/2-cycle IPDs are well represented by the gerbil

data).

[Advanced review in

Ashida & Carr, 2011]

|

IID detection can be explained

in terms of the associated difference between excitatory and

inhibitory activity in the two ears when stimulated by signals

that display IIDs (see

Park et al., 1996).

Explore this list of links to relevant publications by Prof. W. Hartmann

and his colleagues (Michigan State University).

|

Loyola Marymount University - School of Film &

Television